It is well known that Apple cares a lot about the confidentiality of the data that passes through the devices in its ecosystem, and that this data is very well protected from prying eyes. However, this does not mean that users are exempt from rules related to ethics, morality and, why not, humanity.

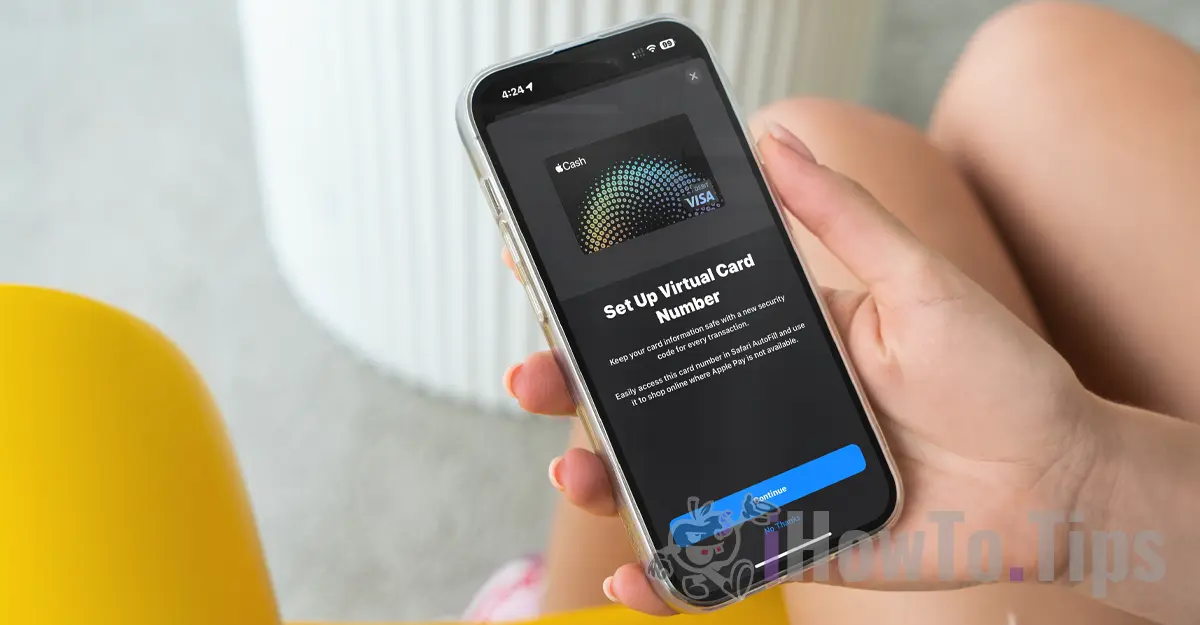

Apple has announced that it will soon launch a technology capable of scanning all images stored by users in iCloud and identifying photos that depict the sexual abuse of children, known as Child Sexual Abuse Material (CSAM).

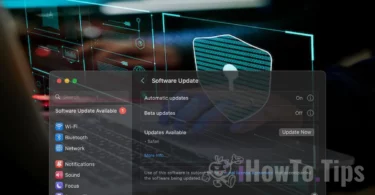

This technology will be launched by Apple towards the end of this year, most likely in a pilot version, for a small number of users.

If you are wondering "where is the data confidentiality?", know that all the video and photo content we receive is transferred through an encrypted protocol. Data stored in iCloud is encrypted as well, but the providers of such services hold the encryption key. This applies not only to Apple: Microsoft, Google and other cloud data storage services do the same thing.

The stored data can be decrypted and verified if it is requested in a legal action or if it flagrantly violates laws, such as those concerning acts of violence against children and women.

To preserve user confidentiality, the company relies on a new technology called NeuralHash, which will scan photos from iCloud and identify those that contain violence against children by comparing them with other images in a database.
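To make the idea of comparing photos with a database more concrete, here is a minimal sketch in Python. It is not Apple's NeuralHash. It uses a much simpler stand-in (a toy "average hash" over a tiny grayscale grid), and every name in it (`average_hash`, `matches_database`, the sample images, the `max_distance` threshold) is a hypothetical chosen only for illustration. The point it shows is that only short fingerprints are compared, not the pictures themselves.

```python
from typing import List, Set

Image = List[List[int]]  # grayscale pixels, each 0-255 (toy representation)


def average_hash(pixels: Image) -> int:
    """Toy fingerprint: one bit per pixel, set when the pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bit positions in which two fingerprints differ."""
    return bin(a ^ b).count("1")


def matches_database(pixels: Image, known_hashes: Set[int], max_distance: int = 1) -> bool:
    """True if the image's fingerprint is within max_distance bits of any known fingerprint."""
    h = average_hash(pixels)
    return any(hamming(h, k) <= max_distance for k in known_hashes)


# Hypothetical 4x4 "images", used only to exercise the functions above.
flagged = [
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
]
unrelated = [
    [200, 200, 200, 200],
    [200, 200, 200, 200],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]

# The database stores fingerprints only, never the images themselves.
database = {average_hash(flagged)}

print(matches_database(flagged, database))
print(matches_database(unrelated, database))
```

In this sketch the database holds nothing but integers, so a match can be detected without anyone looking at the photo's content.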

It is somewhat similar to facial recognition or intelligent recognition of objects, animals and other elements.

The technology will be able to scan and verify the pictures that are taken or transferred through an iPhone, iPad or Mac device, even if they have been altered.
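Recognizing a picture after it has been changed is what separates a perceptual fingerprint from an ordinary cryptographic hash. The sketch below illustrates the difference with a toy "difference hash", which records only whether each pixel is darker than its right-hand neighbour. This is an assumption made for illustration and not the method NeuralHash uses. The function names and the sample image are hypothetical as well.

```python
import hashlib
from typing import List

Image = List[List[int]]  # grayscale pixels, each 0-255 (toy representation)


def difference_hash(pixels: Image) -> str:
    """Toy perceptual fingerprint: '1' where a pixel is darker than its right neighbour."""
    bits = []
    for row in pixels:
        for left, right in zip(row, row[1:]):
            bits.append("1" if left < right else "0")
    return "".join(bits)


def sha256_hex(pixels: Image) -> str:
    """Ordinary cryptographic hash of the raw pixel bytes."""
    flat = bytes(p for row in pixels for p in row)
    return hashlib.sha256(flat).hexdigest()


# Hypothetical image and a slightly brightened copy of it.
original = [
    [10, 200, 10, 200],
    [200, 10, 200, 10],
]
brightened = [[min(255, p + 20) for p in row] for row in original]

# The perceptual fingerprint survives the brightness change...
print(difference_hash(original) == difference_hash(brightened))
# ...while the cryptographic hash changes, because the bytes differ.
print(sha256_hex(original) == sha256_hex(brightened))
```

Because brightening every pixel by the same amount preserves which neighbour is darker, the toy fingerprint stays the same, whereas the SHA-256 digest no longer matches.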